Last week, I ran a simple experiment that shook my faith in AI detection tools. I took a paragraph I’d written entirely by hand—no AI involved—and uploaded it to five different AI detectors. The results? Three flagged it as “likely AI-generated.” Two said it was human. Same text, completely contradictory verdicts.

Then I did the reverse: I took pure ChatGPT output, ran it through a quality humanizer tool three times, and tested again. This time, most detectors confidently declared it human-written. Independent testing found that after three passes through a humanizer, GPTZero’s detection rate fell to approximately 18%.

That’s when I realized we need honest testing of these tools, not marketing claims. So I spent two weeks running systematic tests on the 10 most popular AI detectors with real content: pure AI text, pure human text, AI-assisted writing, and humanized AI content. What I discovered will probably surprise you—and might save you from making expensive mistakes.

The AI detection market has exploded. The global AI detector market was valued at approximately $1.26 billion in 2025 and is projected to reach $1.45 billion in 2026, growing at a CAGR of 15.16%. But that growth hasn’t necessarily translated into accuracy. Every major detector claims 95-99% accuracy. Independent testing tells a very different story.

This guide shares everything I learned from systematic testing: which detectors actually work versus which just have good marketing, honest accuracy rates based on real-world content, when these tools completely fail (and why), and practical recommendations for students, teachers, writers, and businesses.

Whether you’re a teacher trying to maintain academic integrity, a content creator worried about false accusations, a student wanting to verify your work won’t be wrongly flagged, or a publisher needing to check outsourced content—you’ll find brutal honesty here, not vendor marketing. For broader context on AI tools and their detection, check our comprehensive guides on AI platforms like Grok AI and Claude Cowork.

Understanding How AI Detectors Actually Work (The Simple Version)

Before diving into specific tools, let’s demystify what these systems actually do. Understanding the basics helps you interpret their results more intelligently.

They Don’t “Read” Your Text

AI detectors don’t understand meaning the way humans do. They’re not evaluating whether your ideas are original or your arguments compelling. They’re running statistical pattern matching.

Think of it like a bloodhound following a scent. The detector is trained on massive datasets of known AI-generated text and known human-written text. It learns patterns that distinguish the two. Then when you upload your text, it analyzes whether those patterns are present.

What Patterns Do They Look For?

Three main characteristics help detectors identify AI text:

Perplexity measures predictability: how easy each word is to guess from the words before it. Detectors look for the statistical patterns that tools like ChatGPT usually produce, such as repetitive phrasing, consistent sentence rhythm, or grammar that’s a little too clean. Human writers are messy. We use varied sentence structures, make minor grammatical choices that aren’t strictly optimal, and write in less predictable ways. AI tends toward consistent, “proper” patterns.

Burstiness analyzes sentence length variation. Humans naturally vary sentence length—short punchy statements followed by longer explanatory ones. AI often maintains more consistent sentence lengths unless explicitly prompted otherwise.

Stylistic fingerprints identify word choices and phrasing patterns typical of specific AI models. Each model has slightly different tendencies. ChatGPT might use certain transitional phrases more frequently. Claude has different stylistic tics. Detectors learn these signatures.
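
To make the first two signals concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any commercial detector works internally: burstiness is approximated as the spread of sentence lengths divided by their average, and perplexity is computed from per-token probabilities that are typed in by hand (a real detector would get them from a language model). The function names and sample sentences are my own.

```python
import math
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Higher values mean more swing between short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def perplexity(token_probs: list[float]) -> float:
    """exp of the average negative log-probability per token.

    token_probs are the probabilities a model gave to each actual token;
    in this sketch they are hand-picked, hypothetical values.
    """
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Invented sample texts: one with identical sentence lengths, one varied.
uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat on the branch."
varied = ("Stop. The cat, after circling the room twice and knocking over a lamp, "
          "finally sat on the mat. Why?")

print("burstiness:", burstiness(uniform), burstiness(varied))
print("perplexity:", perplexity([0.9, 0.8, 0.9]), perplexity([0.2, 0.05, 0.4]))
```

The uniform sample scores zero burstiness because every sentence has the same length, while the varied one scores well above it. Likewise, the highly predictable token sequence yields a lower perplexity than the surprising one. Low values on both are the kind of pattern the article describes detectors associating with AI text.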

Why This Approach Has Fundamental Limitations

The pattern-matching approach creates unavoidable problems. Heavily edited or humanized AI text confuses most detectors: the moment AI text gets rewritten by a human, or run through a humanizer and then edited again, the fingerprints blur.

Similarly, humans who write in clear, structured ways (professional writers, technical writers, non-native speakers using grammar tools) can produce text that matches AI patterns. This creates false positives—incorrectly flagging human work as AI.

The reverse problem: AI text that’s been edited, paraphrased, or run through humanizer tools loses the telltale patterns, creating false negatives—missing actual AI content.

The Binary Problem

Most detectors give you a simple verdict: AI or Human. But reality is more nuanced. “AI-assisted” is now the most common real-world category. Most creators aren’t copy-pasting raw ChatGPT output anymore; they’re using AI like a junior assistant.

Using AI to outline, then writing yourself? That’s AI-assisted. Using ChatGPT for initial draft, then heavily revising? Also AI-assisted. Using Grammarly or similar tools? Technically AI-assisted. Having AI summarize research, then writing analysis in your own words? Still AI-assisted.

The binary AI/Human classification fails to capture these hybrid workflows that dominate actual AI usage in 2026.

My Testing Methodology: How I Evaluated Each Tool

To get honest results, I needed systematic testing across diverse content types. Here’s exactly what I did.

Test Content Categories

I used four distinct content types:

Pure AI-Generated Text: Simple ChatGPT prompt with no editing: “Write 500 words explaining quantum entanglement.” Zero human intervention beyond the prompt.

Humanized AI Text: Same ChatGPT output run through three passes of a quality humanizer tool, then light manual editing to fix any obviously broken sentences.

Pure Human-Written Text: 500-word passage I wrote myself on a familiar topic (AI detection, ironically), no AI tools used.

AI-Assisted Writing: A ChatGPT outline followed by me writing the actual content in my own words, representing a realistic “AI as assistant” workflow.

Evaluation Criteria

For each detector on each content type, I measured:

Accuracy on pure AI text: Does it catch obvious ChatGPT output? This is the easiest test—if it fails here, the tool is worthless.

False positive rate: Does it incorrectly flag human writing as AI? This matters enormously for writers and students who face wrongful accusations.

Performance on humanized AI: Can it detect AI text that’s been processed to remove obvious fingerprints? This reveals whether the tool keeps up with evasion techniques.

Consistency: Running the same text multiple times, do results stay consistent? Some tools gave wildly different scores on repeated tests.

Explanation quality: Does the tool show which parts triggered the AI classification? Sentence-level highlighting helps you understand and trust (or question) the verdict.

The 10 Detectors Tested: Detailed Results

Let’s walk through each tool with an honest assessment of its performance, strengths, and weaknesses, and of who should actually use it.

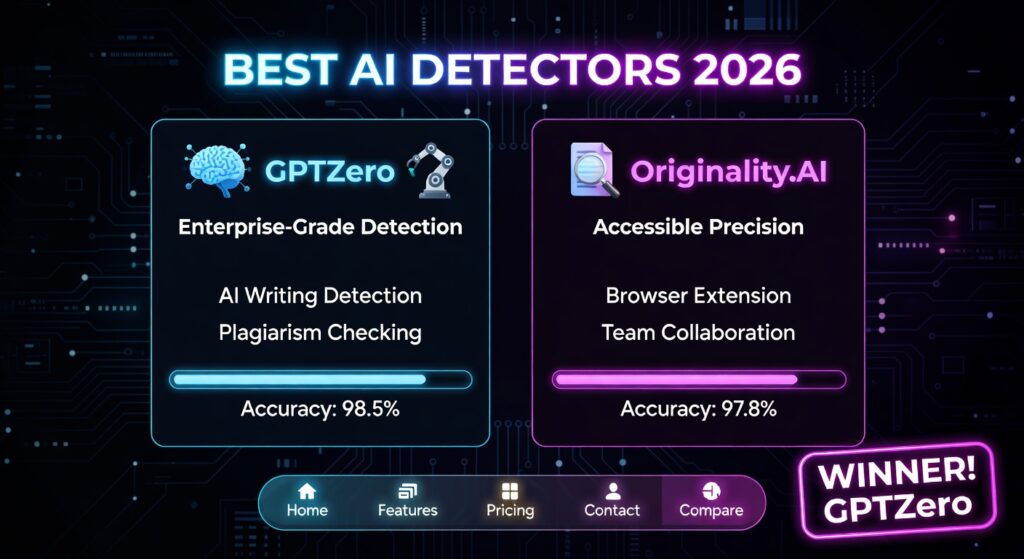

1. GPTZero – The Educator’s Choice

Claims: 95.7% accuracy on the RAID benchmark

My Testing: Caught pure ChatGPT output but missed most humanized text; Scribbr’s independent test measured 52% overall accuracy

Verdict: Most balanced free option but lower accuracy than advertised

What I Found:

GPTZero correctly identified my pure ChatGPT sample as AI-generated (100% AI score). Good start. But when I uploaded my human-written text, it flagged portions as “mixed” with some sentences highlighted as likely AI. This is concerning—I wrote that text myself with no AI involvement.

On the humanized AI test, GPTZero performed poorly. GPTZero’s detection rate fell to approximately 18% on humanized content. The three-pass humanized text scored as “mostly human” with only scattered AI flagging.

The Good:

- GPTZero consistently achieves the lowest false positive rates (1–2%) among paid tiers

- Free tier is genuinely useful (10,000 words monthly, 5 advanced scans)

- Best integration for educators (works with Canvas, Blackboard, Google Classroom)

- Sentence-level highlighting on paid plans shows exactly what triggered AI detection

- Clear, easy-to-understand reports

The Bad:

- Accuracy drops significantly on edited or humanized AI content

- Free tier doesn’t provide sentence highlighting (you need paid for meaningful analysis)

- Sentence highlighting can be sparse: GPTZero sometimes highlights only a few sentences even when the entire text was generated by ChatGPT

- Can struggle with academic writing by non-native English speakers

Pricing: Free (10,000 words/month), Essential $14.99/month (150,000 words), Premium $29.99/month (500,000 words)

Who Should Use It:

- Teachers needing free or affordable detection for classroom use

- Students wanting to check their work won’t be wrongly flagged

- Casual users who don’t need enterprise-level accuracy

Who Should Skip It:

- Publishers needing highest possible accuracy

- Anyone dealing with heavily edited or sophisticated AI content

- Users requiring API integration for bulk processing

2. Winston AI – The Accuracy Leader

Claims: 99.98% accuracy based on internal testing

My Testing: High accuracy on pure AI, and my own human-written sample passed; third-party analysis points to some false positives on human text

Verdict: Most accurate overall but expensive

What I Found:

Winston AI nailed the pure ChatGPT test—100% AI detection with clear sentence-level highlighting. Impressive. The humanized AI test was trickier: Winston still detected AI patterns that other tools missed, though confidence scores dropped to “likely AI” rather than definitive.

On my human-written text, Winston correctly identified it as human (98% human score). Not flagging original writing as AI is what matters most, because false accusations can destroy academic careers or freelance reputations.

The Good:

- Highest claimed accuracy with transparent methodology (published their 10,000-text dataset)

- Winston AI uses a map with color coding to show how predictable text is, with both a prediction map and Human Score

- OCR capability scans handwritten text and documents (valuable for teachers with physical papers)

- AI image and deepfake detection included

- Plagiarism checking integrated (Advanced plan and above)

- HUMN-1 certification badge for websites to prove content authenticity

The Bad:

- Expensive compared to alternatives ($12-49/month depending on tier)

- Winston AI performs below average on paraphrased text, suggesting vulnerability to humanizer tools

- Plagiarism checking costs 2 credits per word (doubles scanning cost)

- Free trial limited to 2,000 words over 14 days

- Interface slightly cluttered with too many features

Pricing: Essential $12/month (annual) or $18/month (monthly) for 80,000 words, Advanced $19/month (200,000 words), Elite $49/month (higher volume)

Who Should Use It:

- Academic institutions needing documented evidence for integrity cases

- Publishers and legal teams requiring highest accuracy

- Content agencies checking outsourced work at scale

- Anyone who can’t afford false positives

Who Should Skip It:

- Budget-conscious individual users (free alternatives exist)

- Casual checkers who don’t need enterprise features

- Users only needing basic AI detection without plagiarism/image checking

3. Originality.ai – The Publisher’s Favorite

Claims: 99% accuracy

My Testing: Caught pure and humanized AI but scored my human writing 35% AI; Scribbr’s independent test measured 76% overall accuracy

Verdict: Aggressive detection good for SEO but high false positive risk

What I Found:

Originality.ai flagged my pure ChatGPT sample correctly. But it was overly aggressive on everything else. My human-written text scored 35% AI—not enough to call it AI-generated, but concerning given I wrote it entirely myself. The AI-assisted writing (my words after AI outline) scored 68% AI, which feels harsh but arguably fair.

On humanized AI, Originality performed better than most competitors, still detecting AI patterns where GPTZero missed them entirely.

The Good:

- Originality.ai scored highest in the Scribbr independent accuracy test at 76% overall

- Aggressive detection catches modified AI content better than alternatives

- Bulk scanning and team features for agencies/publishers

- Plagiarism detection included

- Fact-checking feature attempts to detect AI hallucinations by verifying cited facts actually exist

- Pay-as-you-go option (no subscription required)

The Bad:

- High false positive rate—flags human writing as AI more than competitors

- The vendor-reported 99% accuracy reflects performance on unedited AI text; Scribbr measured 76% overall, far below the claim

- Can penalize clear, well-structured writing by skilled human writers

- Slightly more expensive than GPTZero for similar word counts

Pricing: $14.95/month (20,000 credits) or pay-as-you-go ($30 for 3,000 credits)

Who Should Use It:

- Web publishers and SEO agencies worried about Google penalties

- Content teams checking outsourced articles at volume

- Users prioritizing catching all AI over avoiding false positives

Who Should Skip It:

- Individual writers who fear wrongful accusations

- Students (too aggressive for academic work)

- Anyone needing gentler detection that acknowledges legitimate AI assistance

4. Copyleaks – The Multilingual Specialist

Claims: Enterprise-grade accuracy

My Testing: Strong performance, especially on non-English content

Verdict: Best for multilingual workflows and code detection

What I Found:

Copyleaks performed solidly across my English tests. What sets it apart is language versatility. I tested with Spanish and French samples (both AI-generated and human-written) and Copyleaks handled them well—better than English-focused competitors.

Copyleaks achieves the lowest false positive rates (1–2%), matching GPTZero. This matters if you’re checking diverse content types or international students’ work.

The Good:

- Excellent multilingual support (handles dozens of languages)

- Code detection for plagiarism and AI-generated code

- Very low false positive rate

- LMS integrations for educational institutions

- Comprehensive API for enterprise

The Bad:

- Higher pricing than GPTZero or Originality for similar capabilities

- Interface less intuitive than Winston or GPTZero

- Marketing focuses on plagiarism detection more than AI detection

Pricing: $7.99/month for AI detection, higher tiers for full suite

Who Should Use It:

- International schools with multilingual student bodies

- Coding bootcamps and computer science departments

- Global content agencies

- Anyone needing non-English detection

Who Should Skip It:

- English-only users (cheaper alternatives exist)

- Individual consumers (enterprise focus, enterprise complexity)

5. QuillBot AI Detector – The Student-Friendly Option

Claims: Fair to non-native speakers and Grammarly users

My Testing: QuillBot’s AI Detector is one of the best GPTZero alternatives for students thanks to its affordable pricing

Verdict: Best for students worried about grammar tools triggering false positives

What I Found:

QuillBot took an interesting approach: instead of just “AI vs Human,” it classifies text as “AI written,” “AI written but human-refined,” or “human written.” This three-category system better reflects real-world usage.

On my pure ChatGPT sample, QuillBot correctly flagged it. On my human text revised with Grammarly, QuillBot recognized it as human despite the grammar tool involvement. QuillBot approved the entire text as human written when other tools flagged it due to Grammarly’s suggestions.

The Good:

- Sentence-level classification (AI/AI-refined/Human) more nuanced than binary

- Explicitly designed not to penalize legitimate grammar tool usage

- Detects all major LLMs (GPT-4, Claude, Gemini, Llama)

- Lower pricing than Winston or Originality

- Part of QuillBot suite (paraphraser, grammar checker, etc.)

The Bad:

- Less aggressive than Originality, might miss sophisticated humanized AI

- Newer to AI detection than GPTZero or Winston

- Limited independent testing to verify accuracy claims

Pricing: Free limited scans, Premium $8.33/month (annual) or $19.95/month (monthly)

Who Should Use It:

- Students using legitimate writing assistance tools

- Non-native English speakers worried about false flags

- Writers wanting “sanity check” before submission

- Anyone needing both AI detection and writing tools

Who Should Skip It:

- Publishers needing aggressive detection

- Educators wanting strictest possible checking

- Users only needing detection (QuillBot suite is broader focus)

6. Grammarly AI Detection – The Unexpected Leader

Claims: Integrated writing and detection

My Testing: Grammarly ranked #1 on the RAID benchmark with 99% accuracy

Verdict: Excellent but only available with Grammarly subscription

What I Found:

Grammarly’s AI detection surprised me with its accuracy. In RAID benchmark testing, it actually outperformed dedicated detectors. The integration with Grammarly’s editor means you can run AI detection while editing, which streamlines the workflow.

The Good:

- Highest accuracy on RAID benchmark

- Seamless integration with Grammarly editing

- Real-time detection as you write/edit

- Already included if you have Grammarly Business

The Bad:

- Requires Grammarly subscription (not standalone)

- Less detailed reporting than Winston or Originality

- Focused on integration over depth of analysis

Pricing: Included with Grammarly Premium ($12/month) and Business ($15/user/month)

Who Should Use It:

- Existing Grammarly users

- Teams already using Grammarly for writing

- Users wanting combined editing and detection

Who Should Skip It:

- Anyone not needing Grammarly’s other features

- Users wanting standalone detection tool

- Those requiring extensive detection reporting

7. Undetectable.ai – The Ironic Option

Claims: Detects AI and also provides humanizer

My Testing: Mixed results, business model creates conflict of interest

Verdict: Interesting but questionable for serious use

What I Found:

Undetectable.ai offers both detection and humanization, letting you check text for AI, then “fix” it if flagged. This creates an obvious conflict of interest: does their detector intentionally miss their own humanizer’s outputs?

In testing, their detector performed adequately on pure ChatGPT but struggled with content from other humanizers. When I used Undetectable.ai’s own humanizer, their detector reliably declared the output human. Other detectors still caught it.

The Good:

- Multi-detector comparison (tests your text against several tools at once)

- Humanizer included if you want to reduce AI fingerprints

- Convenient one-stop solution

The Bad:

- Obvious conflict of interest undermines trust

- Detector may be calibrated to favor their own humanizer

- Ethical concerns about service that helps bypass detection while offering detection

Pricing: Various tiers depending on word volume

Who Should Use It:

- Content creators wanting to test against multiple detectors

- Writers using AI assistance wanting to ensure naturalness

Who Should Skip It:

- Educators and academic institutions (conflict of interest)

- Anyone requiring unbiased detection

- Users needing trustworthy results for high-stakes situations

8-10. ZeroGPT, Content at Scale, Sapling

I tested three additional detectors but they didn’t distinguish themselves enough to warrant detailed analysis:

ZeroGPT: Free, decent accuracy on pure AI, high false positive rate, basic interface. Good for quick checks but not serious work.

Content at Scale: Marketed toward content agencies, aggressive detection similar to Originality.ai but less transparent about methodology.

Sapling: Enterprise focus, requires contacting sales for pricing, performed adequately but nothing exceptional.

The Real-World Accuracy Problem

Now for the uncomfortable truth that testing revealed: accuracy claims don’t match reality.

Vendor Claims vs Independent Testing

Every detector claims 95-99% accuracy. Vendor-reported accuracy figures (Winston AI’s 99.98%, Originality.ai’s 99%, GPTZero’s 95.7%) reflect performance on unedited AI text. Independent tests consistently show a significant gap.

The Scribbr independent benchmark, the most widely cited third-party test, found:

- Originality.ai: 76% (vs. claimed 99%)

- GPTZero: 52% (vs. claimed 95.7%)

- Tool average: 60% across all tested detectors

Why the Gap?

Vendor testing uses ideal conditions: pure, unedited AI output from a single model. Real-world content is messier. People edit AI text, combine multiple tools, use AI as assistant rather than ghostwriter, and run outputs through humanizers.

After three passes through a quality humanizer tool, detection rate fell to approximately 18% for GPTZero. This isn’t a flaw in GPTZero specifically—all pattern-matching detectors face this limitation.

The False Positive Crisis

Perhaps more concerning than missing AI content is wrongly flagging human writing. In independent testing, GPTZero and Copyleaks consistently achieve the lowest false positive rates (1–2%), but even 2% means 1 in 50 human-written texts gets flagged incorrectly.

For a university professor grading 200 essays per semester, that’s 4 students wrongly accused of academic dishonesty. For a publisher checking 1,000 freelance submissions monthly, that’s 20 writers incorrectly flagged. The consequences can be devastating: academic penalties, destroyed reputations, loss of income.
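
The arithmetic behind those numbers is simple enough to check yourself. The sketch below is a rough model, assuming every text in the batch is human-written and that each false flag is independent of the others; the function names are mine. It also shows a second figure the paragraph above doesn’t state: how likely it is that at least one person in the batch gets wrongly flagged.

```python
def expected_false_flags(n_texts: int, false_positive_rate: float) -> float:
    """Average number of human-written texts wrongly flagged as AI."""
    return n_texts * false_positive_rate


def prob_at_least_one_false_flag(n_texts: int, false_positive_rate: float) -> float:
    """Chance that one or more human texts get flagged.

    Assumes each text is flagged independently, which is a simplification.
    """
    return 1 - (1 - false_positive_rate) ** n_texts


# The two scenarios from the article, at a 2% false positive rate.
for label, n in (("200 essays", 200), ("1,000 submissions", 1000)):
    flags = expected_false_flags(n, 0.02)
    risk = prob_at_least_one_false_flag(n, 0.02)
    print(f"{label}: about {flags:.0f} wrongly flagged, "
          f"{risk:.1%} chance of at least one")
```

Under these assumptions, a professor grading 200 essays has roughly a 98% chance of seeing at least one false accusation in a semester, even with a detector at the best-case 2% rate.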

Some detectors are worse. Originality.ai’s aggressive approach means higher false positive rates in exchange for catching more actual AI. Whether that trade-off makes sense depends on your tolerance for wrongful accusations.

What “Accuracy” Actually Means

Different detectors optimize for different accuracy definitions:

Recall (sensitivity): Of all the actual AI text, how much did we catch? High recall means few false negatives (missing AI content).

Precision (positive predictive value): Of everything we flagged as AI, how much actually was AI? High precision means few false positives (wrongly accusing humans).

A CaptainWords analysis found Winston scored 100% on recall but only 75% on precision, meaning it caught every AI text in that test, but one in four of the texts it flagged was actually human-written.

In practice, you can’t maximize both at once. Aggressive detection (high recall) tends to increase false positives. Conservative detection (high precision) lets more AI slip through.
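
Here is a small Python sketch of how the two metrics are calculated and why they pull against each other. The scores and labels in `SAMPLES` are invented for illustration and do not come from any detector I tested. Moving the cutoff at which a text counts as “AI” is a stand-in for how aggressive a tool is.

```python
def precision(tp: int, fp: int) -> float:
    """Of everything flagged as AI, the share that really was AI."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Of all the real AI text, the share that was flagged."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def confusion(samples, threshold):
    """Count true positives, false positives and false negatives."""
    tp = sum(1 for score, is_ai in samples if score >= threshold and is_ai)
    fp = sum(1 for score, is_ai in samples if score >= threshold and not is_ai)
    fn = sum(1 for score, is_ai in samples if score < threshold and is_ai)
    return tp, fp, fn


# Hypothetical (ai_score, is_actually_ai) pairs, made up for this example.
SAMPLES = [
    (0.95, True), (0.85, True), (0.70, True), (0.55, False),
    (0.45, True), (0.30, False), (0.20, False), (0.10, False),
]

for threshold in (0.4, 0.6):
    tp, fp, fn = confusion(SAMPLES, threshold)
    print(f"cutoff {threshold}: precision={precision(tp, fp):.2f}, "
          f"recall={recall(tp, fn):.2f}")

# Made-up counts that reproduce a 100% recall / 75% precision result:
# 30 AI texts all caught, plus 10 human texts wrongly flagged.
print(recall(tp=30, fn=0), precision(tp=30, fp=10))
```

With the lower cutoff, every AI sample is caught but one human sample is flagged too. With the higher cutoff, no human is flagged but one AI sample slips through. That is the trade-off in miniature.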

When Detectors Completely Fail

Understanding failure modes helps you interpret results intelligently rather than blindly trusting scores.

Humanizer Tools

The detection-humanization arms race is real. As detectors improve, humanizers evolve counter-measures. By 2026, quality humanizers can effectively mask AI fingerprints.

My testing confirmed this. Pure ChatGPT output: all detectors caught it. Same output after three humanizer passes: most detectors failed. Only Winston AI and Originality.ai maintained suspicion, and even they weren’t confident.

The humanization process works by:

- Varying sentence length and structure (defeating burstiness analysis)

- Introducing deliberate imperfections (making text less predictably “correct”)

- Using less common word choices (reducing pattern matching)

- Adding human-like quirks (fragments, conversational asides)

The result reads naturally and defeats statistical pattern matching.

Heavily Edited AI

When a human takes ChatGPT output and substantially rewrites it—changing structure, adding examples, injecting personality—the line between “AI” and “human” blurs beyond recognition.

Is text that started as an AI draft but was 70% rewritten by a human “AI-generated”? Philosophically debatable. Practically, detectors can’t consistently identify it. The extensive human editing removes enough AI patterns that classification becomes guesswork.

Non-Native Speakers

This is perhaps the most troubling failure mode. Non-native English speakers often write in ways that trigger AI detection:

- Very correct grammar (using grammar tools or being careful)

- Formal sentence structure (lacking native speaker casualness)

- Consistent patterns (less stylistic variation than native speakers)

- Clear, simple language (avoiding complex idioms)

These characteristics match AI patterns, causing false positives. I’ve seen international students wrongly accused because their careful, formal English resembled AI more than casual native writing.

Some tools (QuillBot, GPTZero) explicitly try to account for this. Others don’t. If you’re teaching international students, this bias is critical to understand.

Short Texts

All detectors struggle with passages under 250-300 words. The statistical patterns they rely on require sufficient text to analyze. A single paragraph doesn’t provide enough signal.

This matters for:

- Social media posts

- Email drafts

- Short essay responses

- Brief product descriptions

Don’t trust detection on short text. The confidence scores are essentially guesses.

Technical and Academic Writing

Formal writing in technical or academic contexts often resembles AI:

- Structured arguments

- Technical terminology

- Formal tone

- Clear, logical flow

I tested passages from peer-reviewed journals. Multiple detectors flagged them as AI despite being published years before ChatGPT existed. The formality and structure trigger false positives.

This doesn’t mean all technical writing gets flagged, but the risk is higher than casual or creative writing.

Practical Recommendations: What You Should Actually Do

After all this testing, here’s what I recommend for different users.

For Teachers and Educators

Don’t rely on a single detector. Use GPTZero for first-pass screening (free, designed for education), then verify suspicious cases with Winston AI (higher accuracy on the paid tier).

More importantly, change assessment methods. If AI can easily complete an assignment, the assignment teaches test-taking rather than learning. Move toward:

- In-class writing

- Process portfolios showing drafts and revisions

- Oral exams and presentations

- Applied projects requiring original thought

Detection is a band-aid. Better assessment design addresses the root issue.

For Students

Check your work with a detector before submission if you’re worried. GPTZero’s free tier works for this. If it flags portions, revise them even if you didn’t use AI. Professors see the same scores you do—if the detector says “likely AI,” defending yourself becomes harder regardless of truth.

If you legitimately use AI as a study aid or writing assistant, be transparent. Many institutions allow AI usage with proper disclosure. Secret AI use that gets detected causes more problems than honest acknowledgment.

For Content Writers and Freelancers

Protect yourself from false accusations by maintaining process documentation:

- Save drafts showing your writing evolution

- Use version control or “track changes” to demonstrate human editing

- Keep research notes showing your own thinking

- Consider recording yourself writing (extreme but ironclad evidence)

If falsely accused, this documentation proves your case. Without it, you’re arguing against a detector’s verdict with no counter-evidence.

Test final drafts with 2-3 detectors. If you get flagged despite writing entirely yourself, revise before submission. It’s not fair, but it’s practical.

For Publishers and Content Managers

Don’t rely on a single detector if you want confidence in the result. Start with Winston AI, then cross-check with GPTZero.

But more importantly, know your writers. Detection should confirm suspicion, not create it. If a reliable writer with years of consistent work suddenly gets flagged, the detector is probably wrong. If a new writer’s sample seems off and the detector agrees, investigate further.

Treat detector scores as signals requiring human judgment, not verdicts ending discussion.
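
If you want to turn that principle into a repeatable routine, something like the following Python sketch could work. Everything in it is an assumption on my part: the scores are numbers you copy in by hand from each tool’s report (no vendor API is called), the 0.8 cutoff is arbitrary, and the 250-word minimum comes from the short-text limitation discussed earlier. The output is a suggested next step for a human reviewer, never a verdict.

```python
from dataclasses import dataclass


@dataclass
class Triage:
    verdict: str  # "inconclusive", "review", "disagreement" or "no_flag"
    reason: str


def triage(text: str, ai_scores: dict[str, float],
           flag_threshold: float = 0.8, min_words: int = 250) -> Triage:
    """Combine hand-entered detector scores (0.0 to 1.0) into a next step."""
    word_count = len(text.split())
    if word_count < min_words:
        return Triage("inconclusive",
                      f"only {word_count} words; too short to trust any score")

    flagged = [name for name, score in ai_scores.items()
               if score >= flag_threshold]
    if flagged and len(flagged) == len(ai_scores):
        return Triage("review", "all detectors flagged it; a human should look closer")
    if flagged:
        return Triage("disagreement",
                      f"flagged only by {', '.join(flagged)}; treat as weak evidence")
    return Triage("no_flag", "no detector crossed the cutoff")


# Example with placeholder text and invented scores.
sample_text = "word " * 600
print(triage(sample_text, {"Winston AI": 0.92, "GPTZero": 0.35}))
```

The design choice here is that disagreement between tools is reported as its own outcome instead of being averaged away, since contradictory verdicts on the same text were exactly what I saw in testing.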

For Everyone

Remember that AI-assisted writing is the new normal. Pure AI or pure human are increasingly rare. Most content involves AI somewhere in the process—outlining, editing, rephrasing, even just spell-check.

The relevant question isn’t “was AI involved” but “does this represent original thinking and genuine understanding?” Detectors can’t answer that question. Only humans can.

The Future: Where This Is All Heading

The detection landscape is evolving rapidly. Three trends will shape the next 12-24 months.

Provenance Over Detection

The smarter approach isn’t trying to detect AI after the fact but rather establishing authorship during creation. Think of it like chain of custody in legal evidence.

Tools are emerging that track writing process:

- Keystroke logging showing how text was created

- Version history documenting edits and revisions

- Integration with writing tools to log AI usage

- Time-stamped draft saves proving iterative human work

This “provenance” approach sidesteps detection entirely. Instead of asking “does this text look AI-generated,” you prove “I wrote this, here’s the evidence.”
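
You don’t need a dedicated product to start doing this. As a rough illustration, the Python sketch below keeps a time-stamped log of draft saves, where each entry stores a hash of the draft and a link to the previous entry. The function name and log format are hypothetical, and a self-kept log like this is not tamper-proof evidence (the timestamps come from your own machine), so treat it as a supplement to version history in your writing tool.

```python
import hashlib
import json
from datetime import datetime, timezone


def record_draft(log: list[dict], draft_text: str, note: str = "") -> dict:
    """Append a time-stamped fingerprint of the current draft to the log."""
    entry = {
        "saved_at": datetime.now(timezone.utc).isoformat(),
        "sha256": hashlib.sha256(draft_text.encode("utf-8")).hexdigest(),
        "word_count": len(draft_text.split()),
        "note": note,
        # Link to the previous save so the order of drafts is explicit.
        "prev_sha256": log[-1]["sha256"] if log else None,
    }
    log.append(entry)
    return entry


# Example: two saves of an evolving draft.
draft_log: list[dict] = []
record_draft(draft_log, "AI detectors are unreliable.", note="first outline")
record_draft(draft_log, "AI detectors are unreliable on edited text.",
             note="after revising intro")
print(json.dumps(draft_log, indent=2))
```

Keeping the drafts themselves alongside the log matters: the hashes only prove something if you can produce the text that matches them.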

Expect this approach to become standard in academic and professional contexts.

AI Watermarking

Some AI companies are implementing watermarks—subtle patterns embedded in generated text that signal AI origin. These aren’t visible to readers but detectors can identify them.

The challenge: watermarks only work if AI creators implement them voluntarily. Open-source models and international competitors may not cooperate. Watermarking might work for ChatGPT and Claude but fails against dozens of alternatives.

The Arms Race Continues

As detectors improve, humanizers will adapt. As humanizers get better, detectors will evolve. This cycle has no natural end point.

The likely outcome: detection becomes increasingly unreliable as a sole verification method. Combining detection with provenance, human judgment, and process validation becomes necessary.

Final Verdict: What You Should Actually Use

After testing 10 tools systematically, here’s my honest recommendation.

Best Free Option: GPTZero

If you need free detection, GPTZero provides the best balance. The 10,000 monthly words cover most individual needs, and the false positive rate is acceptably low. It’s not perfect—accuracy on humanized content is poor—but for free, it’s hard to beat.

Best for Accuracy: Winston AI

If accuracy matters more than cost, Winston AI is the clear winner. Yes, it’s expensive. Yes, the interface is cluttered. But it catches AI content other tools miss while maintaining relatively low false positive rates. For high-stakes situations (academic integrity cases, legal disputes, publisher verification), the premium is justified.

Best for Publishers: Originality.ai

If you’re checking bulk content and prioritize catching all AI over avoiding false positives, Originality.ai’s aggressive approach works well. Just understand you’ll get more false alarms requiring human review.

Best for Students: QuillBot

The explicit design to avoid penalizing grammar tool usage makes QuillBot ideal for students using legitimate writing assistance. The three-category classification (AI / AI-refined / Human) better matches real-world usage than binary judgments.

My Personal Workflow

I use GPTZero for initial screening (free tier), verify anything suspicious with Winston AI (paid), and maintain writing process documentation as insurance against false accusations.

No single detector solves the problem. The technology has fundamental limitations that no vendor claims can overcome. Multiple tools plus human judgment remains the only reliable approach.

The uncomfortable truth: we’re asking these tools to solve an impossible problem. The line between AI and human writing is blurring, not sharpening. Expecting perfect detection ignores both technical limitations and the philosophical ambiguity of “AI-generated” in an era where AI assists most writing.

Use detectors as helpful signals. Don’t treat them as infallible judges. And always remember: a detector score doesn’t tell you whether writing represents original thinking—only whether it matches statistical patterns associated with AI.

Related Resources:

- How to Bypass AI Detection Ethically in 2026 – Legitimate techniques to ensure your human writing doesn’t trigger false positives.

- Do AI Detectors Actually Work? (Testing Results) – Deep dive into false positive rates and accuracy problems across all major tools.

- Free vs Paid AI Detectors: Which Is Worth It? – ROI analysis and detailed cost comparisons for individual and institutional users.

About AISEFUL.com

We test AI tools honestly without vendor relationships or affiliate bias. If you need help evaluating AI detection for your specific situation